Empower Teachers with AI

Built for education. Driven by real data.

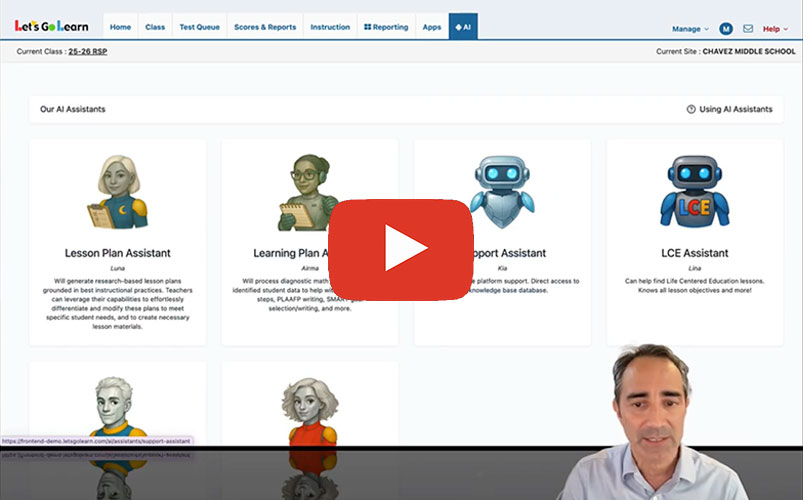

AI Assistants

In this quick demo, watch how our AI assistant, Airma, instantly generates a PLAAFP and a SMART goal — right inside the Let’s Go Learn platform. Perfect for IEPs, faster documentation, and more personalized support for students. Let’s Go Learn’s AI Assistants leverage our high-quality data to dramatically save time while improving accuracy and compliance.

- Built for teachers and specialists

- Saves time on data evaluation and paperwork

- Hallucination-free

- FERPA-compliant

Meet Luna, the Lesson Plan Assistant

See how Luna helps teachers analyze student lessons, identify learning gaps, and provide personalized next steps — all in real time. Whether you’re a classroom teacher, intervention specialist, or administrator, Luna supports you with actionable insights instantly.

- No extra cost – Luna is available to all Let’s Go Learn users

- Generate AI-empowered lesson plans for students based on their data

- Save time and support every learner more effectively

Let Luna do the heavy lifting so you can focus on what matters most — teaching!

Luna generates research-based lesson plans grounded in best instructional practices. Teachers can use Luna's capabilities to differentiate and modify these plans to meet specific student needs, and to create the necessary lesson materials.