Moving toward District-Wide K-12 Differentiated Testing Articulation

The Need for Educational Testing

A key challenge for K-12 education is balancing the need for testing with the time it takes away from instruction. The testing needs of district administrators are often at odds with those of special education and intervention departments. At the broadest level, district administrators want to keep their fingers on the pulse of their schools so they can allocate resources to struggling schools. At the most detailed, individual level, special education and intervention departments need specific data so they can develop personalized learning plans for each student. Testing articulation planning is a best practice that lets K-12 districts differentiate testing, so assessment administrators know which students are taking which test. This helps districts avoid the trap of testing all students the same way, and it improves student outcomes.

The Issues

The Devastating Cost of Not Using Granular Diagnostic Assessments

If the district administrator elects not to purchase a diagnostic assessment for the special education and intervention departments, the results can be devastating. What are the real costs of not purchasing a granular diagnostic assessment?

- Student outcomes are reduced because diagnostic assessments contribute to better IEPs and specially designed instruction (SDI) for students with IEPs. Without these tools, teachers will be making guesstimates not based on data.

- Legal costs and lawsuits increase when legal compliance for students is not met. The analysis and reporting of diagnostic assessments set the milestones for progress monitoring.

The Solution: A Flexible Approach to Assessment Articulation

Articulation addresses how a district rolls out testing across student populations and grade levels. There are two challenges to effective articulation:

- If students with IEPs are in general education classes, a plan must be constructed to determine which students get the diagnostic assessments.

- If not all students are tested with the broad-based assessment, school-wide data used to predict state testing results will be skewed.

Fortunately, there are sound solutions to these challenges.

Solution 1: Use existing procedural frameworks for accommodations.

Implement flexible in-class testing by using existing frameworks.

- For example, students with dyslexia or ADHD may already have been given extra time for testing. This provides the procedure for differentiation. These students could start at a different time or have a separate room so they are not interrupted by students ending their testing sooner.

Prepare lists ahead of time that describe which test each student will take along with the pertinent information, such as timing or limitations.

- The test admin can then read administration scripts for all assessments, one for students about to take MAP-m and one for students about to take ADAM (LGL’s math diagnostic). They can also explain in advance the completion times for the appropriate group and then remind students when time is up: “Group A, please stop now and quietly do your after-test activity.”
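For districts that keep rosters in a spreadsheet export, the list preparation described above could be sketched roughly as follows. This is a minimal illustration, not a prescribed process: the student labels, time limits, and notes are hypothetical placeholders, and `group_by_assessment` is a helper name invented for this example.

```python
from collections import defaultdict

# Hypothetical roster: every name, time limit, and note here is a placeholder.
roster = [
    {"student": "Student 1", "assessment": "MAP-m", "minutes": 45, "notes": ""},
    {"student": "Student 2", "assessment": "ADAM", "minutes": 60, "notes": "extra time"},
    {"student": "Student 3", "assessment": "MAP-m", "minutes": 45, "notes": ""},
    {"student": "Student 4", "assessment": "ADAM", "minutes": 60, "notes": "separate room"},
]


def group_by_assessment(entries):
    """Group roster entries by the assessment each student will take."""
    groups = defaultdict(list)
    for entry in entries:
        groups[entry["assessment"]].append(entry)
    return dict(groups)


groups = group_by_assessment(roster)

# Print one list per assessment so the test administrator knows
# which script to read to which group.
for assessment, entries in groups.items():
    print(f"{assessment}:")
    for entry in entries:
        note = f" ({entry['notes']})" if entry["notes"] else ""
        print(f"  {entry['student']}: {entry['minutes']} min{note}")
```

A list grouped this way gives the administrator one page per script, with timing and accommodations next to each name.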

Solution 2: Use an adjusted predictive proficiency score to avoid skewing the predictiveness of tests.

An adjusted predictive proficiency score will prevent skewing of a test’s ability to predict future performance.

Here’s how to develop an adjusted predictive proficiency score. Don’t worry: we have a tool that will calculate the score for you automatically.

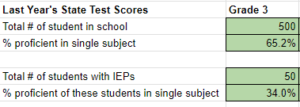

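Step 1: Collect your district’s data for each grade (the green cells): the total number of students tested, the proficiency rate for all students, the number of students with IEPs, and the proficiency rate for students with IEPs. The example here uses 500 students with an overall proficiency rate of 65.2%, including 50 students with IEPs whose proficiency rate is 34%.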

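Step 2: Find the number of students without IEPs (general education) who were proficient by subtracting the number of proficient students with IEPs from the number of all proficient students.

(500 x 0.652) – (50 x 0.34) = 326 – 17 = 309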

Step 3: Find the number of general education students (500 – 50 = 450).

Step 4: Divide the result of Step 2 by the result of Step 3 to get the proficiency rate for general education students only: 309 / 450 = 68.67%

Step 5: Divide the all-student proficiency rate by the general-education-only proficiency rate to get the adjustment rate.

(0.652 / 0.6867 = 0.9495)

Step 6: Once you begin testing only general education students, multiply their proficiency rate by this adjustment rate. The result is an adjusted proficiency rate that stays predictive of your state standards proficiency rate, which is based on a test given to all students.
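For readers who prefer to check the arithmetic in code, Steps 2 through 6 can be sketched in a few lines of Python. This is an illustration of the steps above, not the tool itself: the function names are invented for this example, and the 70% figure in the Step 6 usage is a hypothetical later result, not district data.

```python
def adjustment_rate(total_students, all_rate, iep_students, iep_rate):
    """Return (gen_ed_rate, adjustment) following Steps 2 through 5."""
    if not 0 <= iep_students < total_students:
        raise ValueError("IEP count must be non-negative and below the total")
    # Step 2: proficient general education students
    gen_ed_proficient = total_students * all_rate - iep_students * iep_rate
    # Step 3: number of general education students
    gen_ed_students = total_students - iep_students
    # Step 4: proficiency rate for general education students only
    gen_ed_rate = gen_ed_proficient / gen_ed_students
    # Step 5: all-student rate divided by general-education-only rate
    return gen_ed_rate, all_rate / gen_ed_rate


def adjusted_proficiency(gen_ed_only_rate, adjustment):
    """Step 6: scale a general-education-only rate by the adjustment rate."""
    return gen_ed_only_rate * adjustment


# Figures from the worked example: 500 students, 65.2% proficient overall,
# 50 students with IEPs, 34% of whom were proficient.
gen_ed_rate, adjustment = adjustment_rate(500, 0.652, 50, 0.34)
print(round(gen_ed_rate, 4))  # 0.6867
print(round(adjustment, 4))   # 0.9495

# Hypothetical later result: 70% of general education students proficient.
print(adjusted_proficiency(0.70, adjustment))
```

Because the adjustment rate depends on each grade's own counts and rates, it should be recomputed per grade rather than reused across the district.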

Moving toward differentiated testing requires some change, but the advantages for all students are clear. Frontrunner districts have already blazed the trail and developed best practices such as those described in this blog.
